How to Choose the Best AI for Coding (ChatGPT vs Gemini vs Claude)

I’ve been using ChatGPT since the early beta, and I’ve added Claude and Gemini into my workflow as they came out. As of December 7, 2025, I’ve spent a lot of time asking all three to write code, debug real projects, refactor systems, and even work on entire repos.

Short version of my experience:

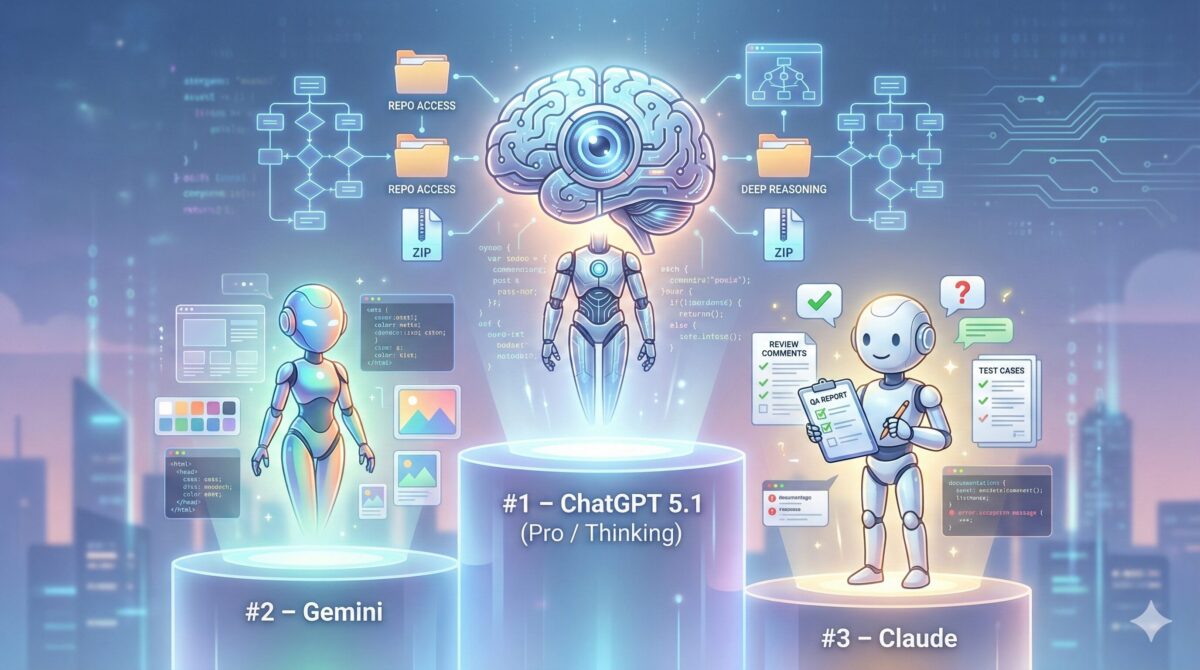

- ChatGPT 5.1 is still the strongest AI for coding overall.

- Gemini is catching up fast and is already better for front-end/CSS and images.

- Claude is the weakest of the three for coding, but still useful in a narrow QA role.

- And tools like Builder.io can sit on top of these models and make all of them more powerful for real projects.

This is a personal, hands-on comparison, not a benchmark lab test. I’ll walk through how each model behaves in real coding work and how a tool like Builder.io can supercharge them.

TL;DR – My ranking for coding right now

If you don’t want all the details, here’s how I’d summarize it today:

- #1 – ChatGPT 5.1 (Pro / Thinking)

Deep reasoning, understands complex systems, can work across whole repos and ZIP files, and actually plans before coding. Best “pair programmer”.

- #2 – Gemini

Improving quickly, cleaner code style, better for CSS / design / images. But still behind ChatGPT on complex backend logic and real-world reliability.

- #3 – Claude

Struggles with complex relationships and larger systems, limited file handling. I’d use it mostly as a QA reviewer / second opinion, not my main coding AI.

- Bonus – Builder.io

Not a model itself, but a tooling layer that can connect to ChatGPT, Claude, Gemini, Figma, repos, MCP servers, etc. If you’re serious about using AI in a real dev stack, it’s a great way to turn raw models into an actual workflow.

How I’m judging “best AI for coding”

To keep this honest and useful, here’s what I care about when I say “best”:

- Understanding messy, real tasks

Can it figure out what I meant, not just what I literally typed?

- Deep reasoning

Does it catch edge cases I forgot? Does it plan before writing?

- Repo / multi-file support

Can it handle a whole ZIP or repo and understand how files relate?

- Debugging & refactors

Is it good at reading errors, tracing logic, and suggesting minimal fixes?

- Hallucinations

Does it invent APIs, break logic, or confidently lie? (Background: https://cloud.google.com/discover/what-are-ai-hallucinations)

- Front-end & visuals

CSS, layout, design help, and image/video generation.

- File limits + UX

How many files can I send? How annoying is the UI?

With that in mind, let’s go through each model.

ChatGPT 5.1 – The smartest and most reliable for coding

Right now, ChatGPT 5.1 is my default coding assistant.

1. Deep understanding and visible reasoning

The biggest difference with ChatGPT 5.1 (especially the “Thinking” / Pro experience) is how deeply it understands the task.

When I give it a coding prompt, it doesn’t just spit out code. It thinks first, and you can literally see this in the side reasoning panel:

- “The user asked me to do X…”

- “But I need to check Y first… checking…”

- “I should come up with a plan… planning…”

- “Now I’ll write the code and then explain it… writing code… writing explanation…”

Even when my prompt is vague, it often:

- fills in missing pieces

- identifies edge cases I didn’t mention

- suggests extra checks or validations I didn’t think of

That makes a huge difference in real-world coding. It feels less like “code autocomplete” and more like talking to a senior dev who actually thinks before typing.

2. Handling ZIP files and complex systems

One of ChatGPT’s killer features for coding is how it handles ZIP files:

- I can upload a ZIP with multiple code files.

- ChatGPT unzips it, reads every file, and builds a mental model of the system:

- how modules relate

- what each file does

- which parts are core vs supporting

It can’t “run” the code, but by reading it, it can still:

- explain the overall architecture

- find where a bug is likely to be

- suggest changes across multiple files

Then I can ask it to:

- modify the code across several files

- bump versions

- re-zip everything into a new archive

- and give me a download link to the updated ZIP

That workflow is huge. It means I can say:

“Here’s my whole mini-project. Fix X, refactor Y, update the version and give me a new ZIP.”

And it actually does it without me manually patching random snippets together.
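For the upload side of this workflow, here is a minimal sketch of how a project could be packed into a ZIP before handing it to the chat. The excluded directory names and the `zip_project` helper are my own assumptions for illustration, not anything ChatGPT requires; the point is only to keep the archive small by leaving out dependencies and VCS data.

```python
import zipfile
from pathlib import Path

# Hypothetical exclusions; adjust for your own project.
EXCLUDED_DIRS = {".git", "node_modules", "__pycache__", ".venv"}


def zip_project(project_dir: str, archive_path: str) -> list[str]:
    """Zip project_dir into archive_path, skipping excluded directories.

    Returns the sorted list of relative paths written to the archive.
    """
    root = Path(project_dir).resolve()
    archive = Path(archive_path).resolve()
    written = []
    with zipfile.ZipFile(archive, "w", zipfile.ZIP_DEFLATED) as zf:
        for path in sorted(root.rglob("*")):
            # Skip directories and the archive itself (if it lives inside root).
            if not path.is_file() or path == archive:
                continue
            rel = path.relative_to(root)
            if any(part in EXCLUDED_DIRS for part in rel.parts):
                continue
            zf.write(path, rel.as_posix())
            written.append(rel.as_posix())
    return written
```

Calling something like `zip_project("my-mini-project", "my-mini-project.zip")` (both names are placeholders) returns the list of files that went in, which is a quick way to confirm nothing sensitive or bulky was included before uploading.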

Other AIs either:

- don’t handle ZIPs at all

- or feel very limited with multi-file understanding

So in terms of complex system understanding, ChatGPT is way ahead for me.

3. Tips for using ChatGPT effectively for code

A few things I’ve learned:

- Be detailed when you can.

The clearer you are about the input, output, constraints, and environment, the better the result.

- Ask for full code, not “snippets plus TODOs”.

By default it sometimes says things like: “Add your function here…”

I usually append:

“Give me the complete code, no TODOs, no ‘implement X here’ placeholders.”

- Watch out for very long files.

When you ask for huge files, the chat can slow down or get heavy. Splitting large tasks into logical chunks can help.

- Use it as a system-level editor.

Hand it the project in a ZIP, tell it what to change across files, then let it handle the boring work.
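The first two tips can be turned into a small habit. The sketch below is one way I could imagine doing it, not an official recipe: `build_prompt` assembles the details mentioned above and appends the "complete code" instruction, and `find_placeholders` scans a reply for stubbed-out lines. Both function names and the placeholder patterns are hypothetical.

```python
import re

NO_PLACEHOLDER_RULE = (
    "Give me the complete code, no TODOs, no 'implement X here' placeholders."
)

# Hypothetical patterns for spotting stubbed-out sections in a reply.
PLACEHOLDER_PATTERNS = [
    r"\bTODO\b",
    r"add your .* here",
    r"implement .* here",
]


def build_prompt(task: str, inputs: str, outputs: str,
                 constraints: str, environment: str) -> str:
    """Assemble a detailed coding prompt that ends with the no-placeholder rule."""
    return "\n".join([
        f"Task: {task}",
        f"Input: {inputs}",
        f"Output: {outputs}",
        f"Constraints: {constraints}",
        f"Environment: {environment}",
        NO_PLACEHOLDER_RULE,
    ])


def find_placeholders(reply: str) -> list[str]:
    """Return the lines of a reply that look like placeholders."""
    hits = []
    for line in reply.splitlines():
        if any(re.search(p, line, re.IGNORECASE) for p in PLACEHOLDER_PATTERNS):
            hits.append(line.strip())
    return hits
```

If `find_placeholders` returns anything, that is usually my cue to ask again for the full file rather than patching the gaps by hand.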

4. ChatGPT Codex: GitHub-connected, but read-only

There’s also ChatGPT Codex (https://chatgpt.com/codex) which can connect to GitHub. That’s extremely useful for:

- reading your repo

- understanding project structure

- helping with refactors and reviews

But right now, it’s read-only – it can’t push commits directly. You still have to:

- apply changes locally

- or copy/paste / patch yourself

Even with that limitation, it’s still a strong tool when paired with ChatGPT’s reasoning.

5. Where ChatGPT is weak: CSS, design, and images

The main weak spots I’ve hit:

- CSS / design / UI feels basic.

It can write usable CSS, but:

- visual design ideas are often generic

- complex layouts sometimes need more iteration

- it’s not the best for “pixel-perfect” front-end work

- Images / video

Tools like Sora (https://sora.chatgpt.com/library) exist, but for pure design and image quality, I’ve seen better from other tools.

So: ChatGPT is my backend and logic powerhouse, and my repo editor, not my main UI/UX or image generator.

Gemini – Cleaner code, better CSS & images, but still behind for logic

Gemini is moving fast. You can feel it getting better almost month by month.

You even see it in things like the December 4, 2025 Google Workspace announcements (Workspace Studio, agents, etc.) – Google is clearly investing heavily here.

But today, when we talk specifically about coding, I’d still say:

Gemini is good, improving quickly, but not yet at ChatGPT level.

1. Where Gemini is behind ChatGPT for coding

A few pain points I’ve run into:

- File and context limits

You’re more limited in how many files you can upload and how much of a system Gemini can see at once, and there’s no ZIP upload.

- Complex system understanding

It struggles more with:

- multi-file logic

- complex relationships between modules

- bigger architectures

- Hallucinations

This is a big one:

When Gemini doesn’t know, it often invents things:

- fake functions

- non-existent APIs

- code that completely breaks the logic

All AIs hallucinate sometimes, but with Gemini I see it more, especially on non-trivial tasks.
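One cheap way to catch the "non-existent API" kind of hallucination, whichever model produced the code, is to check that the attributes it calls on imported modules actually exist. The following is a rough sketch under my own assumptions: `find_missing_attributes` is a hypothetical helper, it only understands plain `import x` / `import x as y` with one level of attribute access, and it imports the modules it checks, so it should only be pointed at libraries you already trust and have installed. Submodules that are not loaded automatically can show up as false positives.

```python
import ast
import importlib


def find_missing_attributes(source: str) -> list[str]:
    """Return 'module.attr' names used in source that the module lacks.

    A rough first filter, not a full static checker.
    """
    tree = ast.parse(source)
    aliases = {}  # local name -> real module name
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            for alias in node.names:
                if alias.asname:
                    aliases[alias.asname] = alias.name
                else:
                    # `import os.path` binds the top-level name `os`.
                    top = alias.name.split(".")[0]
                    aliases[top] = top

    missing = set()
    for node in ast.walk(tree):
        if (
            isinstance(node, ast.Attribute)
            and isinstance(node.value, ast.Name)
            and node.value.id in aliases
        ):
            module_name = aliases[node.value.id]
            try:
                module = importlib.import_module(module_name)
            except ImportError:
                # The module itself could not be imported at all.
                missing.add(module_name)
                continue
            if not hasattr(module, node.attr):
                missing.add(f"{module_name}.{node.attr}")
    return sorted(missing)
```

For example, generated code that calls `json.parse_file(...)` would be flagged, because the standard `json` module has no such function. It does not prove the rest of the code is correct; it just removes one class of invented calls before you spend time debugging.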

2. Where Gemini is actually better than ChatGPT

It’s not all negative though. I’ve found Gemini better than ChatGPT in a few areas:

- CSS and front-end design

Gemini’s CSS, layout ideas, and UI structure often feel:

- cleaner

- easier to understand

- more “front-end friendly”

- Code style

The code it generates is usually:

- very clean

- well-formatted

- easy to read and follow

- Images / video

For images and video, Gemini is extremely strong. In particular, tools like Nano Banana (for images/video) are, in my experience, among the best generators out there right now.

So my pattern is:

- Use Gemini for:

- CSS help

- UI layout ideas

- visually-related tasks

- clean example code

- Use ChatGPT for:

- serious backend work

- complex refactors

- large systems and repos

- anything where correctness matters more than “pretty code”

3. The trajectory: Gemini might catch up

One important thing:

Gemini is updating frequently and the improvements are significant.

If Google keeps that pace, I wouldn’t be surprised if Gemini reaches ChatGPT’s level for coding in the future.

But right now, if I had to choose one coding brain, I’d still choose ChatGPT.

Claude – Not great at coding, but still has a niche

Claude is the weakest of the three for coding in my experience.

1. Main issues I’ve seen

- Can’t handle complex relationships well

It struggles when the logic spans many files or multiple layers of abstraction.

- File limits are restrictive

You’re limited in how much you can upload, and:

- you can’t upload ZIPs like with ChatGPT

- working across a whole project is much harder

- Poor CSS/design and no real image game

For CSS, layout, and visuals, Claude is basically not in the race for me.

It also doesn’t compete on images or video.

2. Where Claude is still useful

For me, Claude’s role is:

QA assistant / second opinion.

I’d use it to:

- read through code and comment on clarity

- help with documentation

- suggest test cases or edge cases

- give a different explanation of a concept

But as a primary AI coding assistant, it’s firmly behind ChatGPT and Gemini.

Side-by-side comparison: ChatGPT vs Gemini vs Claude for coding

Here’s how I’d compare them by category, based on real use:

Understanding complex systems & repos

- ChatGPT – Best by far; can unzip large projects, read many files, understand relationships, and make multi-file changes.

- Gemini – Struggles more with complex systems; file limits get in the way.

- Claude – Weakest here; doesn’t handle big multi-file setups well.

Reasoning depth

- ChatGPT – Strong visible reasoning (planning steps, checking, then coding). Feels closest to a senior dev.

- Gemini – Reasoning is improving but still more brittle on tricky logic.

- Claude – Good at natural language reasoning, weaker on code reasoning.

Hallucinations

- ChatGPT – Can hallucinate, but less often on well-scoped coding tasks.

- Gemini – Hallucinates a lot more in code; invents APIs and breaks logic.

- Claude – Can hallucinate too, but I see it mainly as a reviewer, not a primary coder.

CSS, UI, design, and images

- ChatGPT – Basic. Acceptable CSS, but not impressive; images are overshadowed by other tools.

- Gemini – Best here, especially combined with tools like Nano Banana. Front-end help is cleaner.

- Claude – Very weak in this area.

File handling & UX

- ChatGPT – ZIP uploads, multi-file context, Codex integration (read-only GitHub) – overall the best tooling.

- Gemini – More limited in file number/size; harder to do whole-project work.

- Claude – No ZIPs, tighter limits; cumbersome on bigger tasks.

Where tools like Builder.io fit in (AI “amplifier” for coding)

So far we’ve talked about models. But in real projects, you usually need a tooling layer on top of the models to make them truly useful.

That’s where something like Builder.io comes in.

1. Builder.io is not “another AI”, it’s an integration platform

Builder.io isn’t an LLM like ChatGPT, Gemini, or Claude. It’s more like:

- a visual builder / headless CMS / design-to-code toolkit

- that can connect to multiple AIs (ChatGPT, Claude, Gemini) and other services:

- Figma

- your repos

- MCP servers

- etc.

It basically wraps these models in real workflows.

2. Example: Figma → Builder.io → AI → code

A really powerful pattern is:

- Start in Figma for design.

- Use Builder.io’s Figma integration to pull that design into Builder.

- From Builder.io, plug into ChatGPT / Gemini / Claude to:

- help turn the design into clean components

- adjust layout and structure

- generate responsive variants / code snippets

This is much more practical than just saying “here’s a Figma screenshot, write me a React app” in a raw chat.

3. Example: Connecting Builder.io to repos and MCP servers

Because Builder.io can connect to things like:

- repos

- MCP servers

- other runtime tools

You can do more serious stuff like:

- tie your generated UI into a real backend

- keep your code in sync with a live project

- let AI make suggestions that are anchored to the actual source of truth (your repo / components library), not just random boilerplate

In other words, if you’re serious about using AI for coding, a tool like Builder.io:

- takes the strengths of ChatGPT / Gemini / Claude,

- adds structure, integrations, and guardrails,

- and turns them into something you can actually ship with.

4. How I see the roles

In my head, the roles look like this:

- ChatGPT – brain for logic, architecture, refactoring, big changes.

- Gemini – helper for front-end, CSS, and visuals.

- Claude – optional QA / documentation reviewer.

- Builder.io – the glue that connects:

- designs (Figma)

- code (repos, components)

- AI (ChatGPT/Gemini/Claude)

into one workflow you can really build products with.

So… what’s the best AI for coding in 2025?

If I had to answer the main question directly:

What’s the best AI for coding right now – ChatGPT, Gemini, or Claude?

My honest answer is:

- Primary pick: ChatGPT 5.1

- Best overall reasoning

- Best at understanding and editing entire systems

- ZIP + repo workflows are far ahead

- Secondary pick: Gemini

- Great for CSS, visuals, and clean example code

- Improving fast and might catch up, but not there yet for complex logic

- Niche pick: Claude

- Mostly useful as a QA / review assistant, not my main coder

- Tooling layer: Builder.io

- If you’re serious about AI and coding, pairing these models with a platform like Builder.io (Figma + repo + MCP integrations) makes your setup much more powerful and practical.

If you’re only going to invest time deeply in one AI coding assistant today, I’d still say:

Start with ChatGPT 5.1, keep an eye on Gemini’s rapid improvements, and use tools like Builder.io to turn all of this into an actual end-to-end workflow.

You’ll get the best mix of raw AI power and real-world productivity.

FAQ – ChatGPT vs Gemini vs Claude for Coding

Which AI is best for coding right now?

Right now, ChatGPT 5.1 is still my main pick for coding. It understands complex tasks deeply, plans its steps, and can work across multiple files or even a full ZIP of your project. That makes it feel like a real pair programmer, not just a code autocomplete.

ChatGPT vs Gemini for coding: which should I use?

Gemini is improving fast and already does a great job with CSS, layout, and visuals. Its code style is usually very clean and readable. But when it comes to hard logic, multi-file changes, and not hallucinating APIs, ChatGPT is just more reliable for me. So in a direct ChatGPT vs Gemini for coding comparison, I still choose ChatGPT as the primary tool and use Gemini more as a front-end/design helper.

Is Claude a good primary AI for coding?

For me, no. Claude is usable, but it’s clearly the weakest of the three for coding. It:

– struggles with complex relationships in larger systems,

– has tighter limits on how much code you can upload,

– doesn’t support ZIP uploads the way ChatGPT does,

– and is very weak on CSS/design and images.

Where Claude is useful is as a QA / reviewer: reading code, helping improve documentation, suggesting test cases, and giving second opinions on logic. I wouldn’t trust it as my primary “write and refactor my whole project” assistant, but I don’t mind using it as an extra pair of eyes.

Is it safe to paste my code into these AI tools?

This is a big one, and it doesn’t have a one-size-fits-all answer. A few general rules I follow:

– Check your company policy first. Some teams ban pasting proprietary code into any external AI.

– Prefer paid / enterprise plans with clearer data handling policies over completely free consumer accounts.

– For truly sensitive pieces (security logic, proprietary algorithms), be extra careful or keep them out of the prompt.

– If you’re going to use AI heavily on a real repo, consider combining it with a tool like Builder.io or other platforms that integrate with your stack and have clearer controls around where your data goes.

Bottom line: technically you can paste code, but you shouldn’t do it blindly. Treat it like sending code to an external contractor – you need to know the rules before you do it.
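As one small guardrail for the "keep sensitive pieces out of the prompt" rule, here is a minimal sketch that masks obvious hard-coded secrets before code is pasted anywhere. The `redact_secrets` helper and its single regex are assumptions of mine for illustration; they only catch quoted values assigned to names containing words like `api_key`, `secret`, `password`, or `token`, and they are no substitute for your company policy or a real secret scanner.

```python
import re

# Hypothetical patterns; extend them for the secret formats your team uses.
SECRET_PATTERNS = [
    # Matches assignments such as API_KEY = "..." or db_password: '...'
    re.compile(
        r"(?i)\b(\w*(?:api_key|secret|password|token)\w*)(\s*[:=]\s*)(['\"])[^'\"]*\3"
    ),
]


def redact_secrets(source: str) -> str:
    """Replace quoted values of secret-looking names with REDACTED."""

    def _mask(match: re.Match) -> str:
        name, sep, quote = match.group(1), match.group(2), match.group(3)
        return f"{name}{sep}{quote}REDACTED{quote}"

    for pattern in SECRET_PATTERNS:
        source = pattern.sub(_mask, source)
    return source
```

It errs on the side of over-redacting (a variable called `tokenizer` would be masked too), which I think is the right trade-off for something you run just before pasting code into an external tool.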

Are the free tiers enough, or do I need a paid plan?

Free tiers are great for:

– trying each model,

– generating small snippets or simple scripts,

– seeing whether you like the “feel” of ChatGPT vs Gemini vs Claude.

But for serious coding, I’d say paid is almost required because you get:

– more context (larger prompts, more code at once),

– better models (like GPT-4/5.1 instead of older ones),

– higher rate limits,

– and often better integration options (GitHub, plugins, etc.).

If you’re actively building or maintaining projects, the jump from a free basic model to something like ChatGPT 5.1 Pro is huge. You’ll feel it especially when working with whole repos, refactors, and debugging complex issues.

Do I need a tool like Builder.io on top of these models?

If you’re just playing around or writing tiny scripts, you can live inside the chat window. But if you’re serious about using AI for real products, I think something like Builder.io is a big upgrade:

– It connects to Figma, so you can bring real designs in and not just describe UI in text.

– It can hook into repos, components, and MCP servers, so AI suggestions are tied to your actual codebase, not random boilerplate.

– It gives you a visual and structural layer on top of the models, which makes it easier to keep everything consistent and shippable.

In other words: ChatGPT, Gemini, and Claude are the brains; a tool like Builder.io is the body and skeleton that lets those brains actually build something coherent in a real project. If you’re going beyond experiments and into production work, that extra layer is worth it.